Chirps, howls, clicks, whistles and the pulsed calls of whales — even the screech of a bat may sound like nothing more than noise to many people. However, for scientists studying the communication of different species, those noises are a complex language, more diverse than previously thought. Now, by harnessing the power of artificial intelligence combined with recording devices, researchers believe they’re beginning to uncover the hidden language of nature.

Scientific American reported that scientists are using AI to make breakthrough discoveries ranging from the intricate language of bats to the underwater frequencies of whales.

According to scientists speaking at the World Economic Forum (WEF), decoding these languages relies on two kinds of data: bioacoustic recordings, which capture the sounds of individual organisms, and ecoacoustic recordings, which capture the sounds of entire ecosystems.

One such effort, known as BEANS (Benchmark of Animal Sounds), draws on 10 datasets of animal communication and sets a standard for measuring what's known as "machine learning classification and detection performance."

A scientist involved in the study likened the AI system to Google Translate: it is used to decipher what animals are "saying" to each other through the recorded sounds.

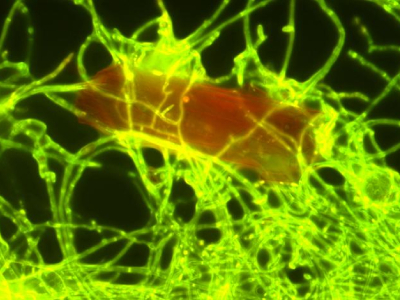

Scientists said that AI is giving them a deeper understanding of non-human species than ever before. According to Scientific American, honeybees communicate through vibrations of their wings and the positions of their bodies, so even the angle of a bee's body relative to the sun can send a message to the colony.

Scientists also discovered that bat mothers "speak" differently to their babies than they do to other adults. This elicits a "babble-like" response from the young and, in the process, teaches them the language of their species.

The BBC shadowed scientists studying how a bat colony in Tel Aviv, Israel, communicates. The scientists used audio, video and AI to analyze the bats' calls.

“We are teaching the computer to define between the different sounds and recognize what each sound means when we hear it,” said Adi Rachum, a researcher with Tel Aviv University.

Vice News reported that researchers have also discovered previously unknown elements of whale vocalizations using AI. Scientists found that sperm whales use sounds similar to vowels in human language to communicate.

Researchers created an AI system that was trained to imitate sperm whale noises and "embed information into these vocalizations." The AI picked out elements of the vocalizations already believed to be important, such as clicks, but it also singled out other acoustic features.

To test the AI's prediction, researchers used as data nearly 4,000 codas, the click sequences sperm whales use to "speak." The prediction held true: scientists found that the codas play a role in whale communication nearly identical to the role vowels play in human language.

“If our findings are correct, it means that the communication of sperm whales is much more complex than previously thought,” the authors from the University of California, Berkeley concluded.

At the 2023 WEF meeting, research on AI and animal language was on display. The nonprofit Earth Species Project, whose sole purpose is to decipher the languages of other species using AI and recordings, called the moment monumental.

“We are on the cusp of applying the advances we are seeing in the development of AI for human language to animal communication,” said Katie Zacarian, the CEO and co-founder of Earth Species Project. “With this process, we anticipate that we are moving rapidly toward a world in which two-way communication with another species is likely.”

The Earth Species Project works with 40 biologists and institutions worldwide. The organization uses AI to translate animal communication into language humans can understand.

“We believe that an understanding of non-human languages will transform our relationship with the rest of nature,” the nonprofit says on its website. “Along the way, we are building solutions that are supporting real conservation impact today.”

Researchers said that uncovering how other species communicate will support conservation efforts, such as preserving and restoring rainforests. Scientists theorize that listening to ecosystems and individual animals may help them gauge the health of the natural environment.

During the WEF, scientists said that AI analysis of species communication has also been used to create “marine animal protection zones.”

Off the West Coast, studies combining recordings of marine animal communication with shipping route data helped develop "mobile marine protected areas."

According to Stanford University, mobile marine protected areas are ocean sanctuaries whose boundaries may shift in space and time to protect wildlife. Natural features such as the Gulf Stream can shift a sanctuary's location: when these features of the ocean move, the protections move with them. GPS allows fishers to find out instantly whether they are in a protected area, even if the boundaries have shifted.